Once it is set up, an online reviewing system costs practically nothing to run, and processing its data is relatively easy compared to other research methods such as data analytics, customer interviews, usability testing, support tickets or staff reporting.

However, because we don’t communicate with customers directly, it’s hard to avoid distorted data. Here are the three main biases you should be aware of when designing your own online reviewing system.

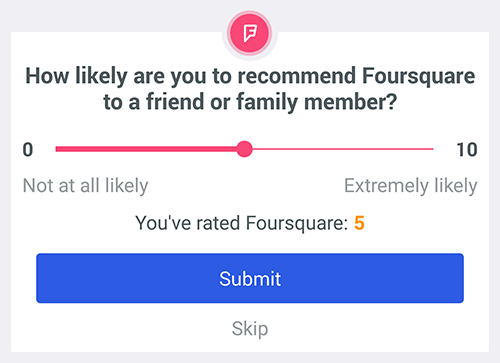

Net Promoter Score has become one of the most popular metrics for rating customer experience.

Bipolar review distribution

Emotions drive people to act. Most customers leave a review when they either love or hate the product, service or experience. If their views are moderate, they simply don’t care enough to go the extra mile and write a review.

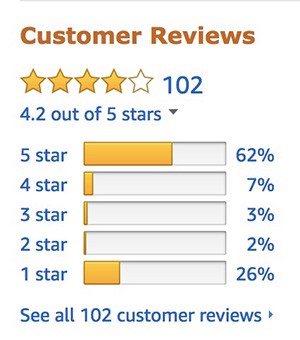

This creates a bipolar distribution of online reviews, with most of them at the two ends of the scale, a phenomenon observed by big review platforms such as Amazon and Yelp.

Amazon often struggles with bipolar review distribution. For instance, in the case of the new iPhone X, only 12% of reviews fall between the two extremes of 1 and 5 stars.
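To see how bipolar your own reviews are, it is enough to count how many fall between the extremes. The sketch below assumes a plain list of 1 to 5 star ratings; the function names and the 100-review sample are hypothetical, chosen only to mirror the 12% figure above, not Amazon’s actual data.

```python
from collections import Counter


def rating_shares(ratings: list[int]) -> dict[int, float]:
    """Share of reviews for each star value on a 1-5 scale."""
    if not ratings:
        raise ValueError("no ratings to analyse")
    counts = Counter(ratings)
    return {star: counts[star] / len(ratings) for star in range(1, 6)}


def middle_share(ratings: list[int]) -> float:
    """Share of reviews strictly between the extremes (2, 3 or 4 stars)."""
    if not ratings:
        raise ValueError("no ratings to analyse")
    middle = sum(1 for r in ratings if 1 < r < 5)
    return middle / len(ratings)


# Hypothetical sample of 100 reviews: mostly 5 or 1 stars.
sample = [5] * 60 + [1] * 28 + [4] * 5 + [3] * 4 + [2] * 3
print(rating_shares(sample))
print(f"middle share: {middle_share(sample):.0%}")  # middle share: 12%
```

A low middle share is a signal that the moderate customers are staying silent, which is exactly the group the techniques below try to reach.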

To get feedback from less excited users too, proactively ask for a review at the moment their emotions are strongest, preferably right after delivery.

Uber, for instance, pushes a notification asking for feedback right after the ride ends. AliExpress first asks customers to confirm delivery of the product by email and then asks them to rate the experience (both the product itself and its delivery).

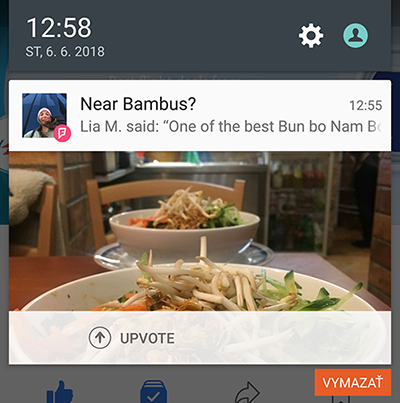

Foursquare tracks users by GPS and sends a notification about the restaurant they are most likely sitting in.

Another way to get more people to leave a review is to use a social incentive. Instead of a shallow call to action like “Rate your experience”, why not ask something like “Help us improve our services for people like you”? At the end of the day, everyone wants to make the world a better place.

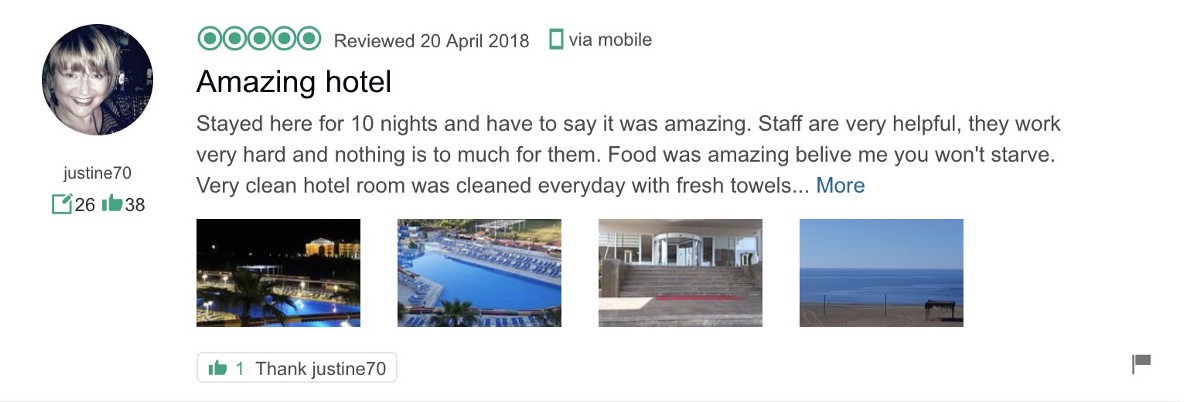

Some services even gamify the reviewing process. Tripadvisor promotes reviewers through several levels based on how many reviews they have written and how many likes those reviews have received. This is a great way to build a base of loyal reviewers on, say, e-commerce websites with a wide product portfolio.

Lastly, it pays to filter out fraudulent and low-quality reviews. Yelp claims that doing so significantly improved the balance of its review distribution. If reviews are public, some websites also let users like or dislike each individual review (as Tripadvisor does), which helps determine its relevance.

Tripadvisor: each user’s number of reviews and likes is displayed right under their name.

Halo effect

The well-known halo effect occurs when people evaluate the whole experience based on just one highly emotional aspect, whether positive or negative. And as we already know, most people leave reviews when they are emotional. So the key question is: how do you lower the impact of the halo effect?

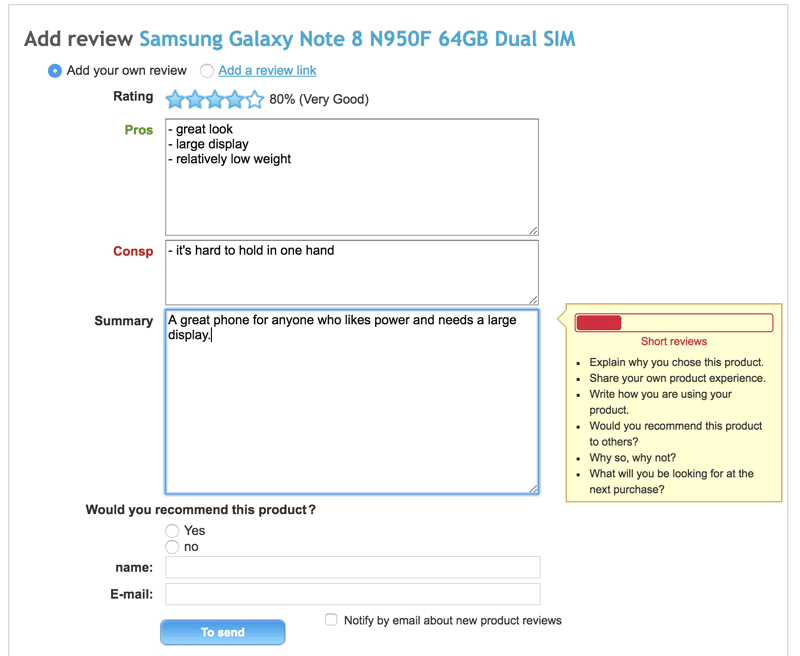

One way is to ask for both the pros and the cons of the experience. This makes people think about the good things even when they are upset about the bad ones, and vice versa. A good example is product reviewing on Heureka.sk, which even displays hints advising the reviewer which aspects to focus on.

Each field has its own hint advising the reviewer what to write about.

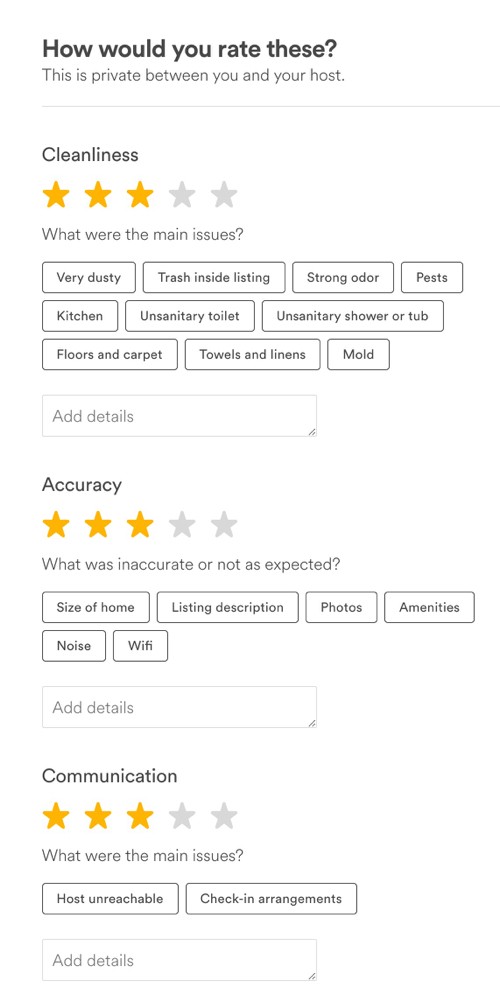

Another way is to ask people to rate different aspects separately. This gives you more structured data, which lets you make the overall rating more objective. What’s more, you can quantify individual aspects of the service, which helps customers who care about one aspect more than another.

For instance, one traveler might prioritise the location of the accommodation, while another might consider spacious rooms the main criterion. Multi-aspect reviewing is used especially for more complex services such as travel (Uber), accommodation (Airbnb, Booking) or gastronomy (Tripadvisor, Foursquare).
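Here is one way structured aspect ratings could be turned into a score that reflects a particular customer’s priorities. This is a minimal sketch under stated assumptions, not how Airbnb, Booking or Tripadvisor compute their scores: the aspect names, the reviews and the per-traveler weights are all hypothetical, and the weighted mean is simply one reasonable choice.

```python
def aspect_averages(reviews: list[dict[str, int]]) -> dict[str, float]:
    """Average each aspect over the reviews that actually rated it."""
    ratings_by_aspect: dict[str, list[int]] = {}
    for review in reviews:
        for aspect, stars in review.items():
            ratings_by_aspect.setdefault(aspect, []).append(stars)
    return {aspect: sum(s) / len(s) for aspect, s in ratings_by_aspect.items()}


def personalised_score(averages: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted mean of aspect averages; weights express what one customer cares about."""
    used = {a: w for a, w in weights.items() if a in averages}
    total_weight = sum(used.values())
    if total_weight <= 0:
        raise ValueError("weights must cover at least one rated aspect")
    return sum(averages[a] * w for a, w in used.items()) / total_weight


# Hypothetical aspect ratings (1-5 stars); the third reviewer skipped "cleanliness".
reviews = [
    {"location": 5, "rooms": 3, "cleanliness": 4},
    {"location": 4, "rooms": 2, "cleanliness": 5},
    {"location": 5, "rooms": 3},
    {"location": 4, "rooms": 2, "cleanliness": 3},
]
averages = aspect_averages(reviews)
print(averages)  # {'location': 4.5, 'rooms': 2.5, 'cleanliness': 4.0}

# Hypothetical priorities of two different travelers.
location_lover = {"location": 3, "rooms": 1}
space_seeker = {"location": 1, "rooms": 3}
print(personalised_score(averages, location_lover))  # 4.0
print(personalised_score(averages, space_seeker))    # 3.0
```

The same accommodation scores 4.0 for the traveler who cares about location and 3.0 for the one who wants spacious rooms, which a single overall star rating would hide. Averaging each aspect only over the reviews that rated it also tolerates reviewers who skip questions.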

The problem is that the more you ask, the fewer people will answer, so you need to find a compromise between quality and simplicity. A great example is Airbnb, which first sends the customer a simple email asking “What was your experience like?” on a five-star rating scale. After the customer rates the overall experience, they are redirected to the Airbnb website, where they receive more questions over several steps. Each step is a little more demanding, but the reviewer can skip any question they don’t want to answer at the moment.

Airbnb

Fear of personal evaluation

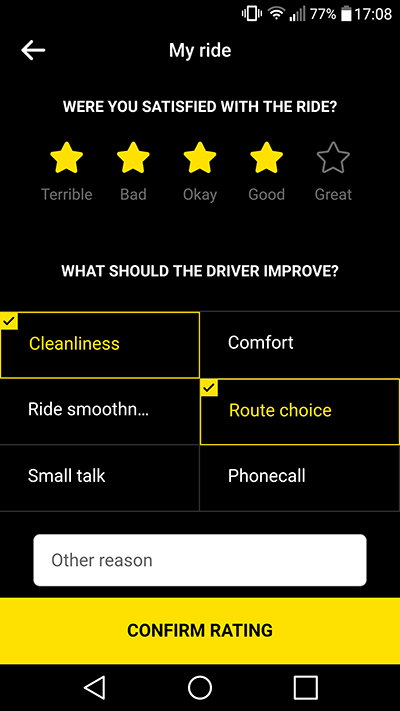

We first observed this bias while doing customer research for Hopin, a taxi marketplace app. People were leaving 5-star reviews even when they weren’t fully satisfied because they didn’t want to get the driver into trouble. “Everyone can have a bad day, I don’t want the driver to lose their job because of me,” said one of the respondents.

To avoid this, it helps to soften the questions and make them about the service, not the people behind it. Instead of “Rate the driver”, we asked “Were you satisfied with the ride?”. Instead of “What was bad?”, we asked “What could be better?”. Instead of asking people to write reviews, we simply gave them several options they could highlight to specify what was good or bad. Interestingly, the amount of feedback we received doubled after the redesign, even though we asked for more data than before.

What can’t be measured can’t be managed

Online reviewing is a powerful research method that can be used to evaluate customer experience across many channels. And with rating systems such as the standard 5-star scale or the Net Promoter Score, it’s easy to quantify the data and track its performance over time.
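As an illustration of how little work that quantification takes, here is a sketch of the Net Promoter Score using its standard definition: customers answer “How likely are you to recommend us?” on a 0 to 10 scale, answers of 9 or 10 count as promoters, 0 to 6 as detractors, and the score is the percentage of promoters minus the percentage of detractors (so it ranges from -100 to +100). The function name and the monthly answers are hypothetical.

```python
def net_promoter_score(scores: list[int]) -> float:
    """NPS from answers on the 0-10 "How likely are you to recommend us?" scale.

    Promoters answer 9-10, passives 7-8, detractors 0-6.
    NPS = % promoters - % detractors, ranging from -100 to +100.
    """
    if not scores:
        raise ValueError("no survey answers")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical survey answers for two months, to show tracking over time.
answers_by_month = {
    "January": [10, 9, 9, 8, 7, 7, 6, 5, 10, 3],
    "February": [10, 10, 9, 9, 8, 7, 6, 10, 9, 4],
}
for month, answers in answers_by_month.items():
    print(f"{month}: {net_promoter_score(answers):+.0f}")
# January: +10
# February: +40
```

Note that passives (7 or 8) count toward the total but toward neither group, so moving a customer from 8 to 9 raises the score while moving one from 7 to 8 does not change it.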

Online reviewing is just one of many research methods, but because of its simplicity and efficiency, it would be a shame not to use it.